by Jessica Caporusso (York University)

As a growing number of reports have made clear, environmental data is at risk. Under the threat of a science-averse Trump administration, researchers and members of the public are concerned that government datasets could be altered, manipulated, or removed from the public domain. Such a move could undermine the value of these datasets as a basis for sound, evidence-based policy.

What can be done to prevent the disappearance of vital public datasets and websites? How can we actively resist such a future through creative collaboration? One possible solution is to archive vulnerable environmental data and program websites on independent servers, such as those run by the Internet Archive.

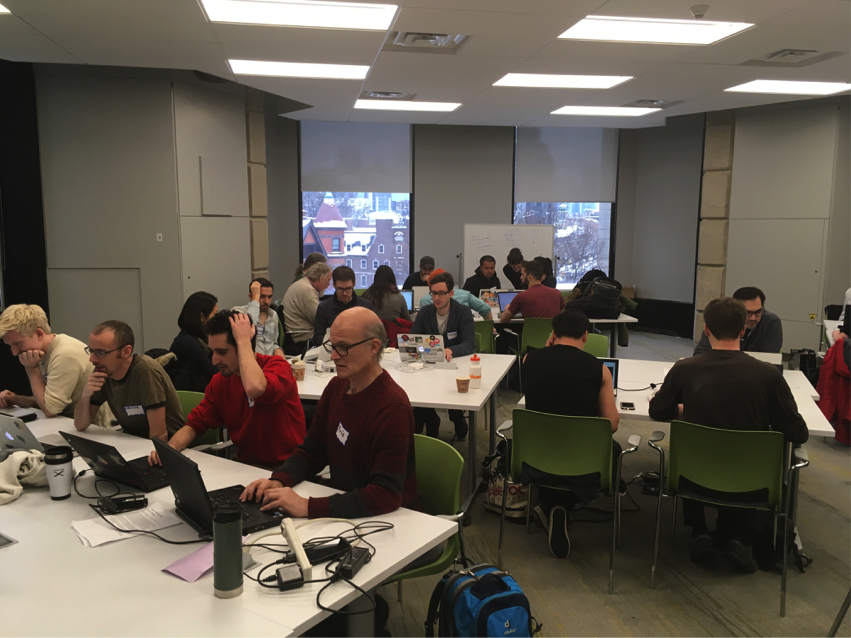

On December 17th, 2016, a group of approximately 150 students, scholars, activists, coders, and members of the general public did just this.

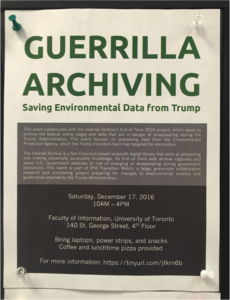

Despite the inclement weather, we converged at the University of Toronto’s School of Information to answer an important call to action. “Guerrilla Archiving,” a grassroots archive-a-thon organized by the Environmental Data Governance Initiative (EDGI) and the Technoscience Research Unit (TSRU), aimed to preserve vulnerable and valuable environmental data in the face of impending threats to environmental research. Spurred on by an increasingly hostile, anti-science political climate in the United States, participants devised strategies to identify and locate data from the Environmental Protection Agency (EPA) and seed it to the Internet Archive’s End of Term 2016 Project.

How did we do this?

Over the span of several hours, we co-developed a protocol and a politics of grassroots archiving. Volunteers self-organized into five teams: researchers tracked and identified vulnerable EPA programs and datasets; coders, including members of the Civic Tech TO community, created six tools and scripts for scraping data from the EPA website and fed the resulting URLs into the Internet Archive’s web crawler; protocol developers drafted critical components of a procedural toolkit for future archiving events; ethnographers documented the entire process qualitatively, through thick description; and the social media team broadcast our efforts in real time across several platforms, including Twitter (#EDGI, #GuerrillaArchiving, #DataRescue), Facebook, and Instagram.

How did we do?

The event seeded 3,142 URLs to the End of Term 2016 Project and earmarked 192 vulnerable programs for archiving. We also drafted several key components of a toolkit for future events. More importantly, as praxis, Guerrilla Archiving sparked a grassroots conversation among scientists, coders, students, and members of the public, one that provided both a lexicon and a means to resist the prospect of losing irreplaceable environmental data. We were joined by colleagues from the #DataRefuge Project in Philadelphia and by EDGI member Jerome Whittington from New York, both of whom are hosting future events. The Creative Commons toolkit for events, kick-started by the EDGI team, will soon be available online through their websites.

Stay tuned for future calls to action and events.

> Environmentalists fear EPA data may change or disappear once Trump takes office. These hackers want to make sure it’s preserved. pic.twitter.com/H03ISV1Z8J
>
> AJ+ (@ajplus), December 21, 2016